A 1.27 fJ/B/transition Digital Compute-in-Memory Architecture for Non-Deterministic Finite Automata Evaluation

Bloom filters for faster regex evaluation

A 1.27 fJ/B/transition Digital Compute-in-Memory Architecture for Non-Deterministic Finite Automata Evaluation Christian Lanius, Florian Freye, and Tobias Gemmeke GLVLSI'25

This paper ostensibly describes an ASIC accelerator for NFA evaluation (e.g., regex matching), but this paper also describes two orthogonal techniques for optimizing NFA evaluation which are applicable to more than just this ASIC.

NFA Primer

Any regular expression can be converted to a non-deterministic finite automaton (NFA). Think of an NFA like a state machine where some inputs can trigger multiple transitions. The state machine is defined by a set of transitions. A transition is an (input symbol, current state, next state) tuple. The non-deterministic naming comes from the fact that multiple tuples may exist with identical (input symbol, current state) values; they only differ in their next state values. This means that an NFA can be in multiple states at once.

One way to evaluate an NFA is to use a bitmap to track the set of active states. For each new input symbol, the set of active states in the bitmap is used to determine which transitions apply. Each activated transition sets one bit in the bitmap used to represent the active states for the next input symbol.

Compute-in-Memory

The hardware described in this paper uses a compute-in-memory (CIM) microarchitecture. A set of columns stores the state machine, with each column storing one transition. This assumes that the transition function is sparse (i.e., the number of transitions used is much lower than the maximum possible). During initialization, the transitions are written into the CIM hardware.

An input symbol is processed by broadcasting it and the current state bitmap to all columns. All columns evaluate whether their transition should be activated. The hardware then iterates (over multiple clock cycles) over all activated transitions and updates the state bitmap for the next input symbol.

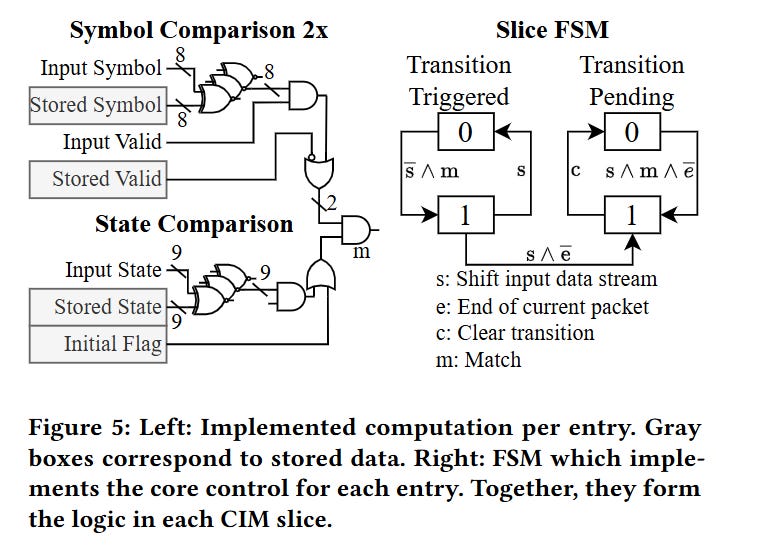

The left side of Fig. 5 illustrates the hardware in each column which compares the input symbol, current state, against the stored tuple:

The algorithm described above processes at most one input symbol per cycle (and it is slower for inputs that activate multiple transitions). The paper contains two tricks for overcoming this limitation.

Cool Trick #1 - Two Symbols Per Cycle

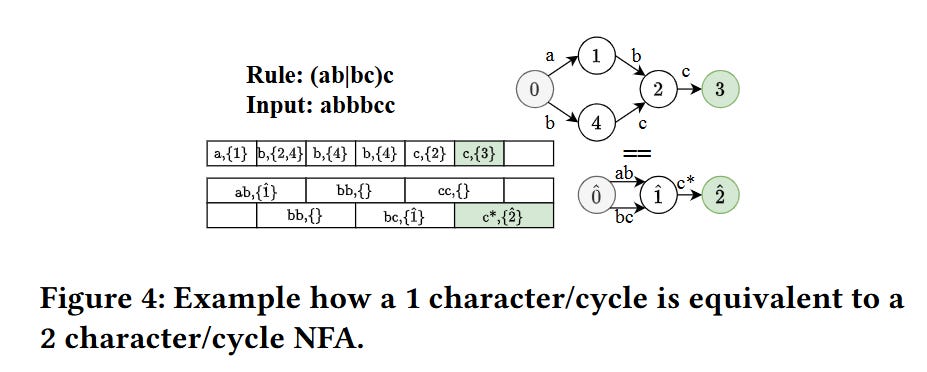

Fig. 4 illustrates how an NFA that accepts one symbol per cycle can be converted into an NFA which accepts two symbols per cycle. For example, rather than consider a and b to be separate symbols, put them together into one mega-symbol: ab. This is feasible as long as your NFA implementation isn’t too sensitive to the number of bits per symbol.

Cool Trick #2 - Bloom Filter

The target application for this hardware is monitoring network traffic for threats (e.g., Snort). A key observation is that most inputs (network packets) do not produce a match, so it is reasonable to assume that most of the time the NFA will be in the initial state, and most input symbols will not trigger any transitions.

If that assumption holds, then a bloom filter can be used to quickly skip many input symbols before they even reach the core NFA evaluation hardware.

The bloom filter is built when the NFA transition function changes. To build the bloom filter, iterate over each transition for which (current state == initial state) holds. For each such transition, compute a hash of the input symbol, decompose the hashed value into N indices, and set the corresponding N bits in the bloom filter.

To test an input symbol against the bloom filter, hash the input symbol, decompose the hashed value into N indices, and check to see if all of the N corresponding bits are set in the bloom filter. If any bit is not set, then the input symbol does not trigger a transition from the initial state. When that symbol finally arrives at the NFA hardware, it can be dropped if the NFA is in the initial state.

Results

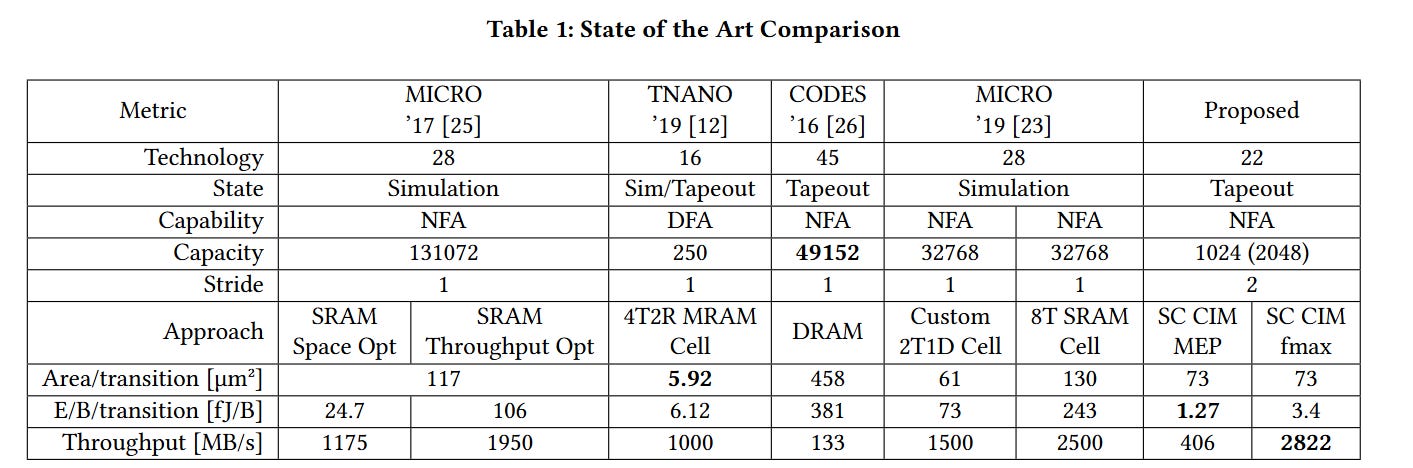

Table 1 compares PPA results against other published NFA accelerators. It is a bit apples-to-oranges as the various designs target different technology nodes. The metric that stands out is the low power consumption of this design.

Dangling Pointers

I wonder if the bloom filter trick can be extended. For example, rather than assuming the NFA will always be in the initial state, the hardware could dynamically compute which states are the most frequent and then use bloom filters to drop input symbols which cannot trigger any transitions from those states.

Yeah, that does seem to be the crux of it. I think Fig. 9 says that if you remove the bloom filter, power increases by 3x.

I wonder how much of the low power metric is due to the Bloom filter.